
Module refactoring discussion #335


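I feel the two proposals aren't very different. And how does this solve the problem of passing parameters down layer by layer? Doesn't `cfg` also have to be passed down through every layer?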

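Well, the difference is that one needs a `ModuleBase` and the other doesn't. As for the result: only a single `cfg` is passed through each of the current layers, and any additional parameters no longer need to be passed explicitly. For example, if `mlp` needs three new parameters (`act`, `bias`, and `dropout`), they only have to be added to `cfg`, instead of adding three new parameter slots to each of `mlp`, `transformer`, and `bert`.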
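A minimal sketch of the cfg-passing idea, in plain Python so it runs without OneFlow or libai. The class names `MLP`, `TransformerLayer`, and `Bert` are hypothetical stand-ins for the corresponding libai layers, and `cfg` is assumed to be any object with attribute access (here `types.SimpleNamespace`). Only `MLP` reads `act`, `bias`, and `dropout`; the outer layers just forward `cfg`.

```python
from types import SimpleNamespace


class MLP:
    # Hypothetical MLP: reads its options from cfg instead of taking
    # act/bias/dropout as separate constructor arguments.
    def __init__(self, hidden_size, cfg):
        self.hidden_size = hidden_size
        self.act = cfg.act
        self.bias = cfg.bias
        self.dropout = cfg.dropout


class TransformerLayer:
    # Only forwards cfg; it never needs to know which fields MLP reads.
    def __init__(self, hidden_size, cfg):
        self.mlp = MLP(hidden_size, cfg)


class Bert:
    # Also only forwards cfg to each layer.
    def __init__(self, hidden_size, num_layers, cfg):
        self.layers = [TransformerLayer(hidden_size, cfg) for _ in range(num_layers)]


cfg = SimpleNamespace(act="gelu", bias=True, dropout=0.1)
model = Bert(hidden_size=768, num_layers=2, cfg=cfg)
print(model.layers[0].mlp.act)  # gelu
```

Adding a fourth MLP option would then mean adding one field to `cfg` and one line in `MLP.__init__`, with no signature changes in `TransformerLayer` or `Bert`.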

I see, so that's what you meant. So in the end there would be one extra argument in the last parameter position?

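Yes. libai's parameters are usually written in config files, so passing them via `cfg` works better. `**kwargs` would also work, but then wouldn't they still have to be passed through the model?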
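For comparison, a hypothetical sketch of the `**kwargs` alternative, using the same illustrative class names (plain Python, not libai code). Every intermediate layer has to accept `**kwargs` and forward it explicitly, which is the "still has to be passed through the model" cost raised in the comment.

```python
class MLP:
    # Hypothetical MLP that takes the new options as keyword arguments.
    def __init__(self, hidden_size, act="gelu", bias=True, dropout=0.0):
        self.hidden_size = hidden_size
        self.act = act
        self.bias = bias
        self.dropout = dropout


class TransformerLayer:
    # Must accept **kwargs and explicitly forward them to MLP.
    def __init__(self, hidden_size, **kwargs):
        self.mlp = MLP(hidden_size, **kwargs)


class Bert:
    # Must also accept and forward **kwargs, even though it never uses them.
    def __init__(self, hidden_size, num_layers, **kwargs):
        self.layers = [TransformerLayer(hidden_size, **kwargs) for _ in range(num_layers)]


model = Bert(hidden_size=768, num_layers=2, act="relu", dropout=0.1)
print(model.layers[0].mlp.act)  # relu
```

A misspelled option (say `dropuot=0.1`) is only rejected once it finally reaches `MLP.__init__`, as a `TypeError`, and the values must be spelled out at the call site rather than read from a config object.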


Agreed, passing `cfg` is the better option then.


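Here is another solution, similar to the structure of D2 (Detectron2): build the model with LazyConfig as well. For example, D2's RetinaNet config:

```python
from detectron2.config import LazyCall as L
from detectron2.layers import ShapeSpec
from detectron2.modeling.anchor_generator import DefaultAnchorGenerator
from detectron2.modeling.backbone import BasicStem, FPN, ResNet
from detectron2.modeling.backbone.fpn import LastLevelP6P7
from detectron2.modeling.box_regression import Box2BoxTransform
from detectron2.modeling.matcher import Matcher
from detectron2.modeling.meta_arch import RetinaNet
from detectron2.modeling.meta_arch.retinanet import RetinaNetHead

# Relative import as in detectron2's configs/common/models/;
# resolved when the file is loaded with LazyConfig.load.
from ..data.constants import constants

# Each L(cls)(...) only records the constructor and its arguments;
# nothing is built until detectron2.config.instantiate() is called.
model = L(RetinaNet)(
    backbone=L(FPN)(
        bottom_up=L(ResNet)(
            stem=L(BasicStem)(in_channels=3, out_channels=64, norm="FrozenBN"),
            stages=L(ResNet.make_default_stages)(
                depth=50,
                stride_in_1x1=True,
                norm="FrozenBN",
            ),
            out_features=["res3", "res4", "res5"],
        ),
        in_features=["res3", "res4", "res5"],
        out_channels=256,
        top_block=L(LastLevelP6P7)(in_channels=2048, out_channels="${..out_channels}"),
    ),
    head=L(RetinaNetHead)(
        # Shape for each input feature map
        input_shape=[ShapeSpec(channels=256)] * 5,
        num_classes="${..num_classes}",
        conv_dims=[256, 256, 256, 256],
        prior_prob=0.01,
        num_anchors=9,
    ),
    anchor_generator=L(DefaultAnchorGenerator)(
        sizes=[[x, x * 2 ** (1.0 / 3), x * 2 ** (2.0 / 3)] for x in [32, 64, 128, 256, 512]],
        aspect_ratios=[0.5, 1.0, 2.0],
        strides=[8, 16, 32, 64, 128],
        offset=0.0,
    ),
    box2box_transform=L(Box2BoxTransform)(weights=[1.0, 1.0, 1.0, 1.0]),
    anchor_matcher=L(Matcher)(
        thresholds=[0.4, 0.5], labels=[0, -1, 1], allow_low_quality_matches=True
    ),
    num_classes=80,
    head_in_features=["p3", "p4", "p5", "p6", "p7"],
    focal_loss_alpha=0.25,
    focal_loss_gamma=2.0,
    pixel_mean=constants.imagenet_bgr256_mean,
    pixel_std=constants.imagenet_bgr256_std,
    input_format="BGR",
)
```

The benefit of doing this is …; however, the drawback is also fairly obvious: it requires large changes to libai, since almost all of the underlying layers and `config.model` would need to be refactored.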
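To make the D2-style proposal concrete, here is a deliberately simplified, hypothetical re-implementation of the LazyCall/instantiate pattern. It is not detectron2's code: detectron2's `LazyCall` produces an OmegaConf `DictConfig` and supports interpolations such as `"${..out_channels}"`, neither of which this sketch does. It only shows the core mechanics: `L(cls)(**kwargs)` records the constructor and its arguments, and `instantiate` builds nested objects bottom-up. `MLP` and `TransformerLayer` are hypothetical layers.

```python
class LazyCall:
    """Hypothetical, simplified stand-in for detectron2's LazyCall."""

    def __init__(self, target):
        self.target = target

    def __call__(self, **kwargs):
        # Record the callable and its arguments instead of calling it.
        return {"_target_": self.target, **kwargs}


L = LazyCall


def instantiate(cfg):
    # Recursively build nested configs bottom-up, then call the target.
    if isinstance(cfg, dict) and "_target_" in cfg:
        kwargs = {k: instantiate(v) for k, v in cfg.items() if k != "_target_"}
        return cfg["_target_"](**kwargs)
    if isinstance(cfg, list):
        return [instantiate(item) for item in cfg]
    # Leaf values (and plain dicts without "_target_") are returned unchanged.
    return cfg


class MLP:
    # Hypothetical layer; its options arrive as ordinary constructor args.
    def __init__(self, hidden_size, act, dropout):
        self.hidden_size = hidden_size
        self.act = act
        self.dropout = dropout


class TransformerLayer:
    # Receives an already-built submodule instead of MLP's options.
    def __init__(self, mlp):
        self.mlp = mlp


cfg = L(TransformerLayer)(mlp=L(MLP)(hidden_size=768, act="gelu", dropout=0.1))
cfg["mlp"]["dropout"] = 0.2  # override before building, as a config file would
layer = instantiate(cfg)
print(layer.mlp.dropout)  # 0.2
```

Because `TransformerLayer` receives an already-built `mlp`, a new MLP option is added only in the `L(MLP)(...)` call. The flip side is the cost named in the comment: every layer constructor has to be rewritten to accept submodules instead of sizes and options.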

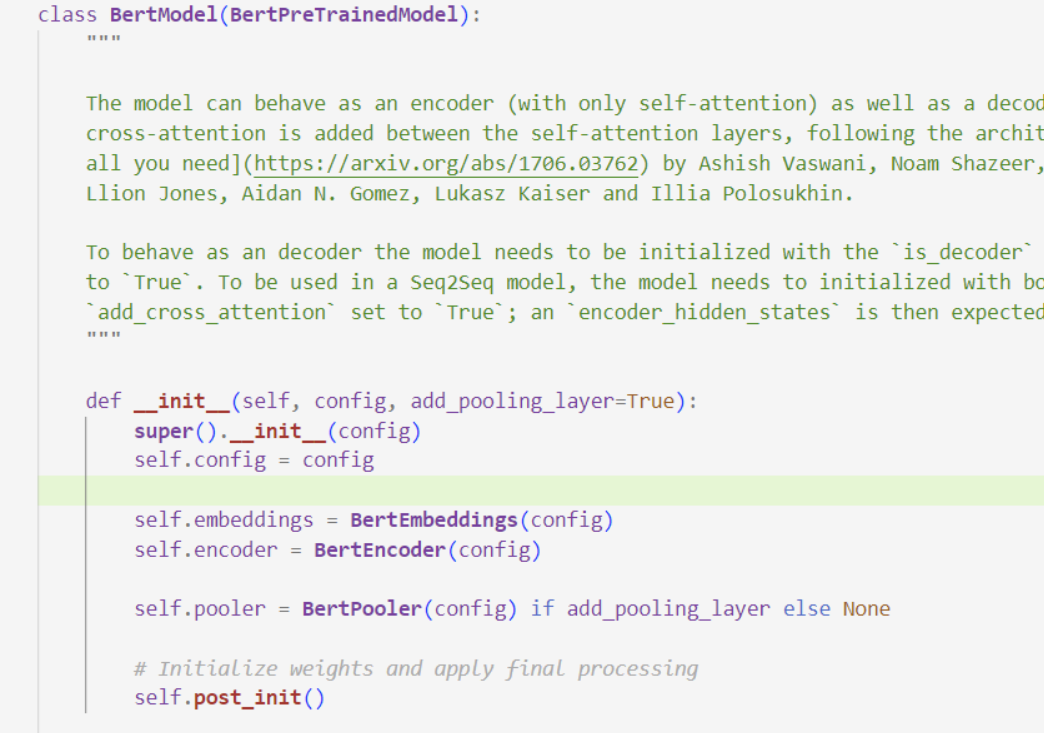

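On solving the problem of parameters being passed down layer by layer in libai: the main idea is to let inner Modules obtain their parameters directly, rather than having them passed in from outside.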

I wrote a simple demo that can be run directly inside libai.

Create a `ModuleBase` base class:

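The cost of the `ModuleBase` approach is that every layer and model has to inherit from it and gains an extra `cfg` parameter. For now, though, `ModuleBase` doesn't seem very useful (this still needs discussion), so below is a demo without `ModuleBase`; the only cost of this approach is the extra `cfg` parameter.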
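A hypothetical sketch contrasting the two variants described in this paragraph. It is not the original demo; `ModuleBase`, `MLPWithBase`, and `MLPPlain` are illustrative names, and plain Python classes stand in for OneFlow modules (in libai, `ModuleBase` would presumably subclass `oneflow.nn.Module`). Both variants take the same extra `cfg` argument; the base class only adds the inheritance requirement and a stored `self.cfg`.

```python
from types import SimpleNamespace


class ModuleBase:
    # Hypothetical base class. In libai it would presumably subclass
    # oneflow.nn.Module; plain object is used so the sketch runs anywhere.
    def __init__(self, cfg):
        self.cfg = cfg  # every subclass gets the shared cfg stored on self


class MLPWithBase(ModuleBase):
    # Variant 1: inherit from ModuleBase and read options via self.cfg.
    def __init__(self, hidden_size, cfg):
        super().__init__(cfg)
        self.hidden_size = hidden_size
        self.act = self.cfg.act
        self.dropout = self.cfg.dropout


class MLPPlain:
    # Variant 2: no base class; the extra cfg parameter is the only cost.
    def __init__(self, hidden_size, cfg):
        self.hidden_size = hidden_size
        self.act = cfg.act
        self.dropout = cfg.dropout


cfg = SimpleNamespace(act="gelu", dropout=0.1)
a = MLPWithBase(768, cfg)
b = MLPPlain(768, cfg)
print(a.act == b.act, a.dropout == b.dropout)  # True True
```

In this form `ModuleBase` contributes little beyond `self.cfg = cfg`, which matches the view that the variant without it is enough for now.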
